Teams rarely struggle because they do not have access to enough SEO tools. More often, they struggle because they have too many, trust the wrong outputs, or build workflows around reports instead of decisions. In practical SEO work, that gap is expensive. It delays fixes, distorts priorities, and creates confidence where caution would be healthier.

This article takes a case-study approach, not by inventing dramatic wins or losses, but by looking at recurring audit patterns that appear across content programs, technical cleanups, migrations, ecommerce sites, and local SEO operations. The names change. The software stack changes. The mistakes repeat.

If you want better outcomes from SEO tools, the goal is not simply to buy more subscriptions or consolidate everything into one dashboard. The goal is to understand what each tool is actually good at, where its blind spots begin, and how the output should be translated into action.

Below are ten costly mistakes to avoid, along with the operating habits that separate useful SEO tools from expensive noise.

Why SEO tools still create bad decisions

SEO tools are models of search behavior, site structure, and competitive visibility. They are not search engines, analytics platforms, or customers. That distinction matters.

A crawler can show you a broken internal linking pattern, but it cannot decide whether fixing it belongs ahead of revenue-critical template issues. A rank tracking platform can reveal movement, but it cannot tell you whether the affected keywords matter to pipeline, product discovery, or qualified traffic. A backlink analysis report can list domains, but it cannot replace editorial judgment about relevance and risk.

In other words, SEO tools become valuable when they support a clear operating model:

- What question are we trying to answer?

- Which source is closest to the truth?

- What action follows from this insight?

- How will we measure whether the action helped?

Without that discipline, even a strong stack can lead a team into weak execution.

Mistake 1: Buying SEO tools before defining the workflow

One of the most common audit patterns is a team that bought SEO tools by category rather than by job to be done. They subscribed to one platform for rank tracking, another for site audits, another for keyword research, another for backlink analysis, and still another for dashboards. None of those choices is inherently wrong. The problem is that no one designed the workflow connecting them.

That usually creates three issues. First, the same metrics appear in multiple places with slightly different methodologies. Second, responsibility becomes blurry because several people can “see” the issue but no one owns the fix. Third, reporting grows faster than execution.

A better approach is to map your workflow first:

- Research

- Opportunity sizing

- Technical validation

- Implementation

- Measurement

- Executive reporting

Then choose SEO tools based on which part of the chain they strengthen. If a tool does not clearly improve one of those steps, it is probably overlap.
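
As a rough illustration of that mapping exercise, the sketch below checks a stack against the workflow steps listed above. The tool names and the `audit_stack` helper are hypothetical, and the job assignments would come from your own team rather than from any vendor's feature list.

```python
# Workflow steps taken from the list above.
WORKFLOW_STEPS = [
    "research",
    "opportunity sizing",
    "technical validation",
    "implementation",
    "measurement",
    "executive reporting",
]


def audit_stack(tool_jobs: dict[str, set[str]]) -> dict[str, list[str]]:
    """Report uncovered steps, steps with overlapping tools, and tools with no job."""
    coverage = {step: [] for step in WORKFLOW_STEPS}
    unassigned = []
    for tool in sorted(tool_jobs):
        known_jobs = [job for job in tool_jobs[tool] if job in coverage]
        if not known_jobs:
            unassigned.append(tool)
        for job in known_jobs:
            coverage[job].append(tool)
    return {
        "uncovered_steps": [s for s, tools in coverage.items() if not tools],
        "overlapping_steps": [s for s, tools in coverage.items() if len(tools) > 1],
        "tools_without_a_job": unassigned,
    }


# Hypothetical stack: two rank trackers and a dashboard nobody has assigned a job.
stack = {
    "crawler_a": {"technical validation"},
    "rank_tracker_b": {"measurement"},
    "rank_tracker_c": {"measurement"},
    "dashboard_d": set(),
}
result = audit_stack(stack)
print(result)
```

Overlap is not automatically waste, but every entry under `overlapping_steps` or `tools_without_a_job` is a prompt to ask who owns that output and why.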

Mistake 2: Treating crawl data as ground truth

Site audit platforms are essential, but they are not a perfect mirror of how search engines process your site. A crawler sees what its configuration, permissions, rendering behavior, and crawl limits allow it to see. That is useful, but not absolute.

In audit-led case work, this mistake shows up when teams react to thousands of flagged issues without verifying whether those issues affect indexation, ranking signals, or user experience. They chase low-value warnings while bigger problems remain untouched.

Examples include:

- Obsessing over minor meta description duplication while important pages are not discoverable through internal links

- Fixing a long list of redirected URLs that are not part of current navigation while canonicals are misaligned on money pages

- Prioritizing generic page speed recommendations without checking rendering or template-specific bottlenecks

The correction is simple: use crawl data as a starting point, not a verdict. Validate critical findings against indexation signals, templates, logs where available, search performance, and actual business importance.

Mistake 3: Using keyword data without search intent review

Keyword research tools are powerful, but they can easily push a content strategy toward volume over fit. A term may look attractive in a database and still be the wrong target for your site, your page type, or your commercial model.

This mistake appears constantly in case-study reviews of content programs. Teams build pages because a keyword has visible demand, then wonder why rankings stall or conversions disappoint. The answer is often intent mismatch.

Before acting on keyword data, ask:

- Is the dominant result set informational, commercial, local, transactional, or mixed?

- Does your page format match what currently earns visibility?

- Can your brand credibly satisfy the query better than what already ranks?

- Is this keyword top-of-funnel research, product comparison, or ready-to-buy demand?

SEO tools can suggest opportunities. They cannot replace manual review of the result page. That review is where intent becomes obvious, and where costly content mistakes are often prevented.

Mistake 4: Reporting rankings without business context

Rank tracking is useful, but isolated ranking movement is a weak basis for management reporting. If a reporting process focuses too heavily on average positions or a keyword basket without tying those metrics to page type, funnel stage, or commercial importance, leadership gets a distorted picture.

For example, a team may celebrate ranking gains on blog terms while product or service pages lose visibility for high-intent queries. Or they may panic over fluctuations on volatile phrases that were never expected to convert.

Strong SEO reporting translates rank tracking into business relevance. That means segmenting by things such as:

- Brand vs non-brand

- Informational vs commercial

- Core revenue pages vs supporting content

- Local vs national intent

- Device or market differences

When rankings are reported in those clusters, the story becomes clearer. Rankings are not the outcome. They are one input into search visibility, qualified traffic, and revenue opportunity.
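
To make the clustering concrete, here is a minimal sketch that averages positions per segment instead of across the whole keyword basket. The row fields (`intent`, `page_group`, `position`) and the sample keywords are assumptions for illustration; a real export from a rank tracker will have its own column names.

```python
from collections import defaultdict
from statistics import mean


def average_position_by_segment(rows, segment_key):
    """Group ranking rows by one segment field and average the positions."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[segment_key]].append(row["position"])
    return {segment: round(mean(positions), 1) for segment, positions in grouped.items()}


# Hypothetical rank tracking export.
rows = [
    {"keyword": "what is crawl depth", "intent": "informational", "page_group": "supporting content", "position": 4},
    {"keyword": "crawl depth guide", "intent": "informational", "page_group": "supporting content", "position": 6},
    {"keyword": "seo audit service", "intent": "commercial", "page_group": "core revenue", "position": 18},
    {"keyword": "seo audit pricing", "intent": "commercial", "page_group": "core revenue", "position": 12},
]

overall = mean(row["position"] for row in rows)
by_intent = average_position_by_segment(rows, "intent")
print(overall, by_intent)
```

In this made-up sample the blended average sits at 10, which hides the fact that informational terms average around position 5 while commercial terms average around 15. That gap is exactly what a single basket number conceals.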

Mistake 5: Letting dashboards hide indexing and rendering problems

Dashboards make SEO feel controlled. That is part of their appeal and part of their danger. A polished dashboard can create the impression that a site is healthy because the trend lines look stable, even when serious indexation or rendering problems are developing underneath.

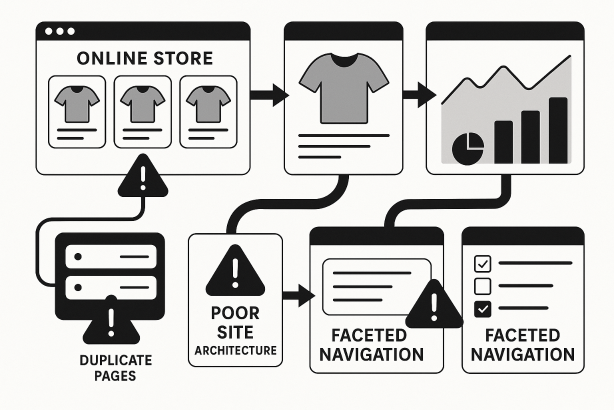

This often happens after template releases, CMS changes, faceted navigation expansions, JavaScript changes, or migrations. The summary charts remain calm while key pages fall out of discoverable pathways or render inconsistently.

To avoid this mistake, make sure your workflow includes direct checks for:

- Indexability on important templates

- Canonical accuracy

- Internal link accessibility

- Rendered content visibility

- Parameter handling and duplicate URL patterns

- Changes in XML sitemap integrity

The lesson is straightforward: dashboards are useful for monitoring, but they should never replace page-level investigation during a technical SEO audit.
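
A page-level check does not need to be elaborate. The sketch below, using only Python's standard library, reads the robots meta tag and canonical link from a page's raw HTML and flags two of the conditions listed above. The `check_page` helper and the sample URLs are hypothetical, and the check has clear limits: it does not execute JavaScript, so it says nothing about rendered content, and it ignores the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class IndexabilitySignals(HTMLParser):
    """Collect the canonical URL and robots meta directive from raw HTML."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")
        elif tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.robots = (attr.get("content") or "").lower()


def check_page(url, html):
    """Return a list of findings for one page; an empty list means none were found."""
    parser = IndexabilitySignals()
    parser.feed(html)
    findings = []
    if parser.robots and "noindex" in parser.robots:
        findings.append("noindex")
    if parser.canonical is None:
        findings.append("missing canonical")
    elif parser.canonical.rstrip("/") != url.rstrip("/"):
        # Not always a defect: some pages canonicalize elsewhere on purpose.
        findings.append("canonical points elsewhere")
    return findings


sample_html = (
    '<html><head>'
    '<link rel="canonical" href="https://example.com/a">'
    '<meta name="robots" content="noindex, follow">'
    '</head><body></body></html>'
)
print(check_page("https://example.com/b", sample_html))
```

Run against a short list of revenue-critical URLs after each release, a check like this can catch a template regression while the dashboard trend lines still look calm.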

Mistake 6: Trusting backlink metrics without reviewing relevance

Backlink analysis platforms can be excellent for discovery, but authority scores and similar metrics are only rough proxies. They are not enough on their own to judge link quality, competitive strength, or outreach opportunity.

In repeated audit patterns, this mistake appears in two forms. The first is overvaluing links because the metric looks strong, even though the referring page is irrelevant, buried, or clearly low-value. The second is dismissing useful links because the metric appears modest, even though the site is contextually aligned and editorially credible.

A stronger review process looks at:

- Topical relevance

- Placement context

- Editorial nature of the mention

- Traffic potential and audience fit

- Link profile patterns, not just single-link snapshots

Backlink analysis should help you ask better questions. It should not reduce link evaluation to one score column.

Mistake 7: Using one tool to validate another tool

Another common problem is circular validation. A team sees an issue in one platform, checks a second platform that uses similar assumptions, and treats the agreement as proof. In reality, both tools may be repeating the same limitation.

This is especially risky with estimated traffic, keyword gaps, site health scores, and visibility calculations. These metrics can be useful directional signals, but they are still estimates. If the business decision is important, estimate-based agreement is not enough.

The better habit is source hierarchy:

- First-party performance data for actual outcomes

- Search engine signals where available for visibility and indexing confirmation

- SEO tools for discovery, pattern recognition, and competitive estimation

When those layers disagree, do not average them mentally. Investigate the disagreement. That is often where the most important insight lives.
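
One way to surface those disagreements is to compare first-party clicks against a tool's traffic estimate page by page and list the largest gaps for manual review. This is a sketch only: the `flag_disagreements` helper, the 50% tolerance, and the sample numbers are all assumptions, and the right threshold depends on how noisy your tool's estimates are.

```python
def flag_disagreements(first_party_clicks, tool_estimates, tolerance=0.5):
    """Return pages where the tool estimate differs from first-party clicks
    by more than `tolerance`, expressed as a fraction of first-party clicks."""
    flagged = []
    for page, clicks in first_party_clicks.items():
        estimate = tool_estimates.get(page)
        if estimate is None:
            continue  # the tool has no estimate for this page
        baseline = max(clicks, 1)  # avoid dividing by zero on pages with no clicks
        gap = abs(estimate - clicks) / baseline
        if gap > tolerance:
            flagged.append((page, clicks, estimate, round(gap, 2)))
    # Largest relative gap first, since that is where investigation should start.
    return sorted(flagged, key=lambda item: item[3], reverse=True)


# Hypothetical monthly figures.
first_party = {"/pricing": 1000, "/blog/guide": 200}
estimates = {"/pricing": 1100, "/blog/guide": 900}
to_review = flag_disagreements(first_party, estimates)
print(to_review)
```

The output is a review queue, not a verdict. First-party data stays the reference for outcomes, and each flagged page is a question about why the estimate drifted.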

Mistake 8: Failing to set baselines before making changes

Teams often deploy technical fixes, content updates, internal linking changes, or template revisions without recording a clean baseline first. Then, when performance moves, nobody can separate correlation from impact.

This is one of the most avoidable mistakes with SEO tools, because the tools themselves usually make baselining possible. What is missing is process discipline.

Before major changes, capture:

- Current rankings for affected keyword groups

- Organic landing pages and search visibility patterns

- Indexation status

- Internal linking depth for impacted pages

- Current metadata, canonicals, and template conditions

- Traffic and conversion trends by page group

That baseline turns later analysis into something credible. Without it, teams rely on memory, isolated screenshots, or assumptions shaped by recent events.
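
A baseline can be as simple as one structured record per affected page, stored before the change and compared afterwards. The sketch below assumes a hypothetical `PageBaseline` record whose fields mirror a few items from the list above; where the values come from (crawler export, analytics, search engine reports) is left to your own stack.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class PageBaseline:
    """Snapshot of one page's state at a point in time (fields are illustrative)."""
    url: str
    indexed: bool
    canonical: str
    click_depth: int
    avg_position: float
    organic_sessions: int


def diff_baseline(before, after):
    """Return {field: (before, after)} for every field that changed."""
    old, new = asdict(before), asdict(after)
    return {field: (old[field], new[field]) for field in old if old[field] != new[field]}


# Hypothetical snapshots taken before and after an internal linking change.
before = PageBaseline("/product/widget", True, "/product/widget", 3, 8.2, 500)
after = PageBaseline("/product/widget", True, "/product/widget", 2, 6.9, 560)
changes = diff_baseline(before, after)
print(changes)
```

A diff like this does not prove causation, but it replaces memory and screenshots with a dated record of what actually changed.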

Mistake 9: Ignoring market, location, and device variation

SEO tools often encourage a simplified view of rankings and opportunities. But search behavior changes by geography, device, language nuance, and local intent. If you track only one default location or one generalized dataset, your picture of search visibility can be badly incomplete.

This matters especially for local businesses, multi-location brands, international sites, and industries where mobile behavior differs sharply from desktop research. A keyword that looks stable in a generic report may behave very differently in the actual market that matters to you.

In case-study-style reviews, this mistake commonly leads to misguided content priorities or false confidence about competitive position. The fix is to set up segmentation that reflects reality:

- Primary markets

- Core locations

- Device categories

- Language or regional variants

- Store or service-area differences where relevant

SEO tools are only as good as the market configuration behind them.
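
To see how quickly a realistic configuration grows beyond one default location, the following sketch expands markets, languages, and devices into the full set of tracking segments. The market codes and the `tracking_segments` helper are hypothetical, and most rank trackers expose this as settings rather than code.

```python
from itertools import product


def tracking_segments(market_languages, devices):
    """Expand each market's languages against every device into one segment per combination."""
    return [
        {"market": market, "language": language, "device": device}
        for market, languages in market_languages.items()
        for language, device in product(languages, devices)
    ]


# Hypothetical setup: one single-language market and one bilingual market.
market_languages = {"US": ["en"], "CA": ["en", "fr"]}
devices = ["mobile", "desktop"]
segments = tracking_segments(market_languages, devices)
print(len(segments), segments[0])
```

Two markets already produce six segments here, which is a useful reminder that a single default-location report covers only a fraction of the picture.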

Mistake 10: Automating alerts and under-prioritizing fixes

Alerts feel proactive. In practice, too many teams automate notifications without designing a response model. The result is familiar: inboxes fill with technical warnings, nobody can tell what matters, and real issues compete with harmless churn.

Good monitoring is not about receiving the highest volume of alerts. It is about defining which alerts deserve immediate action, who owns them, and what threshold triggers escalation.

A practical way to prioritize is to classify issues by:

- Revenue impact

- Template scope

- Indexation risk

- User experience severity

- Ease of implementation

- Likelihood of recurring after deployment

The strongest SEO teams do not just monitor more. They triage better.
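
The classification above can be turned into a simple weighted score so that alerts arrive pre-sorted. This is a minimal sketch, not a recommended formula: the 1-to-5 scales, the `WEIGHTS` values, and the sample issues are assumptions that each team should set for itself.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    """One flagged issue, scored 1 (low) to 5 (high) on each dimension."""
    name: str
    revenue_impact: int
    template_scope: int
    indexation_risk: int
    ux_severity: int
    ease: int        # 5 means easiest to implement
    recurrence: int  # 5 means most likely to recur after deployment


# Hypothetical weights: revenue and indexation risk count most.
WEIGHTS = {
    "revenue_impact": 3,
    "template_scope": 2,
    "indexation_risk": 3,
    "ux_severity": 2,
    "ease": 1,
    "recurrence": 1,
}


def priority(issue):
    """Weighted sum across all scoring dimensions."""
    return sum(weight * getattr(issue, field) for field, weight in WEIGHTS.items())


def triage(issues):
    """Return issues sorted from highest to lowest priority."""
    return sorted(issues, key=priority, reverse=True)


minor = Issue("duplicate meta descriptions", 1, 2, 1, 1, 4, 1)
major = Issue("noindex on product template", 5, 5, 5, 2, 3, 3)
ordered = triage([minor, major])
print([(issue.name, priority(issue)) for issue in ordered])
```

The exact numbers matter less than the habit: every alert gets scored on the same dimensions, and the owner works from the top of the list.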

Case-study patterns: what these SEO tool mistakes usually look like

| Mistake | What teams usually see | What is really happening | Better move |
|---|---|---|---|
| Too many overlapping platforms | Large reporting packs, slow decisions | No workflow ownership between tools | Map tools to clear operational jobs |
| Overreliance on crawl warnings | Long fix lists, little measurable progress | Low-impact issues are outranking important ones | Validate against business-critical templates and search signals |
| Keyword-first content production | Pages published but not gaining traction | Search intent and page type do not match | Review result pages before assigning content |
| Ranking-only reporting | Leadership gets mixed signals | Visibility is disconnected from commercial value | Report by page type, intent, and funnel stage |
| Metric-led link review | Confusing outreach and weak link decisions | Authority proxies are replacing editorial judgment | Assess relevance, context, and pattern quality |
| No baselines before changes | Unclear impact after launches | There is no reliable before-and-after frame | Capture rankings, indexing, template state, and landing pages first |

How to evaluate SEO tools without repeating the same errors

If you are reviewing your current stack, ask five practical questions before renewing or adding anything:

- Does this tool answer a question we cannot answer reliably today?

- What decision becomes faster or better because we have it?

- What first-party or search-engine source do we use to validate it?

- Who owns the output?

- What existing tool or process does it replace, simplify, or sharpen?

Those questions sound simple, but they quickly expose whether a platform is strategic, redundant, or mostly decorative.

A practical stack mindset: choose by job, not by logo

Most teams do not need the biggest stack. They need the clearest one. In broad terms, useful SEO tools usually fall into a handful of jobs:

- Discovery: uncover keyword themes, competitive gaps, and content opportunities

- Technical diagnosis: support site audit work, crawl analysis, and template review

- Measurement: monitor rankings, landing page movement, and organic trends

- Communication: turn findings into SEO reporting that stakeholders can act on

Once you organize your thinking that way, it becomes easier to see whether your stack is balanced. Some teams overspend on discovery and underinvest in diagnosis. Others have strong reporting but weak execution data. The point is not tool minimalism for its own sake. The point is operational clarity.

Before you add another platform, audit the process

Many teams try to solve an execution problem with another subscription. Usually, the bigger need is a process audit. Are your core pages segmented correctly? Are keyword targets mapped to the right page types? Do technical issues have clear owners? Are dashboards highlighting what matters commercially, not just what is easy to chart?

If your current stack is producing more noise than direction, Rabbit SEO can help you simplify the workflow, tighten your reporting, and focus on the fixes that actually matter. Before you buy another platform, review where your current process is leaking value.

Conclusion: the best SEO tools still need judgment

Great SEO tools can save time, reveal blind spots, and sharpen strategy. But they do not remove the need for prioritization, validation, and context. The repeated mistakes above are not technology problems. They are operating problems.

If you want more value from SEO tools, start with the questions, ownership, and validation rules that sit around them. Choose tools by task. Check estimates against reality. Segment reporting by business importance. Treat dashboards as support systems, not substitutes for investigation.

Do that well, and your SEO tools become what they should have been from the start: not a collection of subscriptions, but a dependable decision-making system.