SEO tools are essential in technical SEO, but they are also one of the easiest ways to waste time, misread priorities, and send teams chasing the wrong fixes. A crawler, site auditor, or monitoring platform can reveal issues at scale. It can also overwhelm you with warnings that look urgent but do little to improve crawling, indexing, rankings, or conversions.

The problem is rarely the software itself. The problem is how teams use it. In technical SEO, context matters more than dashboards. A thousand flagged URLs do not automatically mean a thousand problems, and a perfect health score does not guarantee search visibility. If you want better outcomes from SEO tools, avoid the mistakes below and build a workflow that turns raw findings into clear decisions.

Why SEO tools often go wrong in technical SEO

Technical SEO sits at the intersection of crawling, rendering, indexing, site architecture, and deployment. That means tool data is always partial. One crawler may show blocked resources. Another may emphasize duplicate content. Google Search Console may surface indexation issues that a third-party platform cannot fully explain. None of these views is complete on its own.

The biggest mistake is treating tool output as final truth rather than evidence to investigate. The best technical SEO work happens when you combine SEO tools with manual review, server-side understanding, and business priorities.

| Mistake | Why it hurts | What to do instead |
|---|---|---|
| Treating every alert as urgent | Creates noise and drains development time | Prioritize issues by impact on crawling, indexing, and key pages |
| Relying on one data source | Misses important context | Compare crawler data with Google Search Console and real page checks |
| Using default crawl settings blindly | Produces incomplete or misleading audits | Set crawl scope, user agents, rendering, and exclusions intentionally |
| Chasing scores over outcomes | Improves dashboards without improving search performance | Focus on indexation, crawl paths, internal linking, and critical templates |
| Ignoring technical context | Misdiagnoses canonicals, redirects, and rendering issues | Review templates, page types, and search intent behind the URLs |
| Failing to validate fixes | Leaves unresolved issues after release | Re-crawl, inspect URLs, and confirm the intended result in production |

1. Treating every issue in SEO tools as equally important

Most platforms are designed to surface patterns, not to tell you what matters most to your site. A broken canonical on a high-value template is not equal to a missing image alt attribute buried on archived pages. A redirect chain affecting core category pages deserves more attention than a handful of noindex URLs that were intentionally excluded.

When teams act on every warning in the order it appears, technical SEO becomes reactive. Development resources get spent on low-impact cleanup while real blockers remain unresolved. A smart prioritization model should ask:

- Does this issue affect crawlability or indexation?

- Does it impact revenue-driving or strategically important pages?

- Is it sitewide, template-level, or isolated?

- Can Google interpret the page correctly despite the warning?

Your technical SEO audit should produce a ranked action list, not a flat export of tool alerts.
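The four questions above can be turned into a rough scoring model. The following is a minimal sketch, not a standard formula: the `Issue` fields, the weights, and the example issues are all hypothetical and should be adjusted to your own site.

```python
from dataclasses import dataclass

# Hypothetical weights; tune them to your own site and business goals.
SCOPE_WEIGHT = {"sitewide": 3, "template": 2, "isolated": 1}

@dataclass
class Issue:
    name: str
    affects_indexing: bool   # does it put crawlability or indexation at risk?
    hits_key_pages: bool     # does it touch revenue-driving or strategic pages?
    scope: str               # "sitewide", "template", or "isolated"
    google_copes: bool       # can Google still interpret the page correctly?

def priority(issue: Issue) -> int:
    """Higher score means the issue should be handled sooner."""
    score = SCOPE_WEIGHT[issue.scope]
    if issue.affects_indexing:
        score += 4
    if issue.hits_key_pages:
        score += 3
    if issue.google_copes:
        score -= 2
    return score

def rank_issues(issues):
    """Return issues sorted from highest to lowest priority."""
    return sorted(issues, key=priority, reverse=True)

issues = [
    Issue("Missing alt text on archived pages", False, False, "isolated", True),
    Issue("Broken canonical on category template", True, True, "template", False),
    Issue("Redirect chain on core categories", True, True, "template", True),
]

for issue in rank_issues(issues):
    print(priority(issue), issue.name)
```

The exact numbers matter less than the habit: every alert gets scored against the same questions before it reaches a developer.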

2. Trusting a single crawler or platform

No single view tells the whole story. Third-party crawlers are excellent for discovering URL patterns, internal linking gaps, redirect chains, and duplicate signals. But they do not replace Google Search Console, log analysis, or direct inspection of live pages. If you rely on one platform alone, you risk solving what your crawler sees instead of what search engines actually experience.

This is especially important for indexation issues. A tool may report that a page is indexable because it returns a 200 status and lacks a noindex tag. Google may still ignore it due to duplication, weak internal linking, or poor canonical signals. The reverse also happens: a crawler flags a URL that looks problematic, but the page is behaving exactly as intended.

Use multiple inputs before escalating a fix. Compare:

- Site crawl findings

- Search Console page indexing reports and URL inspection results

- Manual URL checks on live pages

- Template-level behavior across page groups
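One simple way to compare inputs is to diff two URL lists. The sketch below assumes you have already exported a list of URLs your crawler considers indexable and a list of URLs Search Console reports as indexed. The function name and the bucket labels are illustrative, not part of any tool's API.

```python
def compare_sources(crawler_indexable, gsc_indexed):
    """Bucket URLs by whether the crawler and Search Console agree."""
    crawler_indexable = set(crawler_indexable)
    gsc_indexed = set(gsc_indexed)
    return {
        # Both sources agree: usually no action needed.
        "agreed": crawler_indexable & gsc_indexed,
        # Technically indexable, yet Google has not indexed them:
        # check duplication, internal links, and canonical signals.
        "indexable_but_not_indexed": crawler_indexable - gsc_indexed,
        # Indexed, but the crawler did not report them as indexable:
        # possible orphan pages, scope gaps, or stale index entries.
        "indexed_but_not_in_crawl": gsc_indexed - crawler_indexable,
    }

result = compare_sources(
    ["/shoes/", "/shoes/red/", "/boots/"],
    ["/shoes/", "/boots/", "/old-landing-page/"],
)
for bucket, urls in result.items():
    print(bucket, sorted(urls))
```

The two disagreement buckets are where manual URL checks are most worth the time.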

3. Running a technical SEO audit with default settings

Default settings are convenient, not comprehensive. A crawler that is not configured for your site can miss large sections of content, misread JavaScript-heavy pages, or spend most of its crawl on environments and URL patterns that do not matter. That leads to audits that look precise but are built on the wrong scope.

Before you run a technical SEO audit, define what the crawl should and should not include. Consider whether you need to render JavaScript, whether parameters should be excluded, whether subdomains belong in scope, and whether staging or faceted navigation should be filtered out. Also decide which user agent matters for the job you are doing.

This matters for large sites in particular. On an enterprise site, a poorly configured site crawl can bury meaningful findings under thousands of low-value URLs. On a smaller site, the same mistake can hide orphan pages, bad canonicals, or internal linking weaknesses because the crawl never reached the right areas in the first place.
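Most crawlers expose scope settings through their own interface, so the following is only a sketch of the underlying logic, written with Python's standard library. The `CRAWL_SCOPE` dictionary, the host name, and the excluded paths and parameters are assumptions made up for illustration.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical scope definition; replace with your own hosts and patterns.
CRAWL_SCOPE = {
    "allowed_hosts": {"www.example.com"},
    "excluded_path_prefixes": ("/staging/", "/cart/"),
    "excluded_params": {"sort", "color", "sessionid"},
}

def in_scope(url, scope=CRAWL_SCOPE):
    """Return True if the URL belongs in the audit crawl."""
    parts = urlsplit(url)
    # Skip subdomains and external hosts that are not part of this audit.
    if parts.hostname not in scope["allowed_hosts"]:
        return False
    # Skip staging areas and other sections excluded on purpose.
    if parts.path.startswith(scope["excluded_path_prefixes"]):
        return False
    # Skip faceted or tracking variants identified by query parameter name.
    params = parse_qs(parts.query, keep_blank_values=True)
    return not (params.keys() & scope["excluded_params"])

for url in [
    "https://www.example.com/shoes/",
    "https://www.example.com/shoes/?color=red",
    "https://staging.example.com/shoes/",
]:
    print(in_scope(url), url)
```

Writing the scope down this explicitly, even if only as a checklist, makes it easier to explain why an audit did or did not cover a given section.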

4. Using SEO tools to chase scores instead of technical outcomes

Health scores can be useful for spotting trends, but they are not an SEO strategy. Teams often become fixated on pushing a score upward without asking whether the changes improve crawl efficiency, reduce ambiguity, or strengthen important pages. That optimizes the dashboard rather than the site.

A score may rise after resolving minor warnings, while core problems remain untouched. For example, you can clean up metadata inconsistencies and still have major issues with pagination, broken canonicals, or weak internal linking to strategic pages. You can also improve a page speed report and still fail to get key URLs indexed if the site architecture is weak.

The better approach is outcome-based. Use SEO tools to answer practical questions:

- Can search engines discover the right pages efficiently?

- Are canonical tags aligned with the URLs you want indexed?

- Are redirects clean and purposeful?

- Are important pages receiving enough internal links?

- Is crawl budget being wasted on low-value URL patterns?

When those answers improve, the score matters less.
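As one example of an outcome-based check, the question about clean redirects can be answered from a crawl export rather than a score. This sketch assumes the export has been reduced to a hypothetical `{source: target}` mapping. It follows each redirect to its end and reports chains and loops.

```python
def redirect_chain(url, redirects, max_hops=10):
    """Follow url through a {source: target} map.

    Returns (hops, is_loop), where hops is the list of URLs visited.
    """
    hops = [url]
    seen = {url}
    while hops[-1] in redirects and len(hops) <= max_hops:
        nxt = redirects[hops[-1]]
        if nxt in seen:
            # The target was already visited: this is a redirect loop.
            return hops + [nxt], True
        hops.append(nxt)
        seen.add(nxt)
    return hops, False

# Illustrative data, not taken from a real site.
redirects = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}

for start in ["/a", "/x", "/c"]:
    hops, is_loop = redirect_chain(start, redirects)
    extra_hops = len(hops) - 1
    print(start, " -> ".join(hops), "loop" if is_loop else f"{extra_hops} hop(s)")
```

Any source that needs more than one hop to reach its final URL is a candidate for pointing directly at the destination.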

5. Ignoring technical context around templates, canonicals, and internal linking

Technical SEO problems rarely exist in isolation. A canonical issue may be caused by a template rule. A duplicate cluster may come from faceted navigation. Weak rankings on important pages may be tied less to metadata than to shallow internal linking or poor crawl paths. If you review pages one by one without understanding the broader architecture, your fixes will be inconsistent.

Pay close attention to page types. Product pages, category pages, blog archives, location pages, and filtered views often behave differently for good reasons. Your job is not to force uniformity. It is to make sure each template sends clear, consistent signals.

In practice, that means checking whether:

- Canonical tags are self-referencing or point to the preferred URL

- Redirects support the intended canonical version

- Important templates are reachable within a reasonable click depth

- Navigation and contextual links reinforce priority pages

- Low-value parameter URLs are controlled appropriately

This is where technical SEO becomes strategic. Tools may reveal symptoms, but template logic explains the cause.
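Click depth is one of the checks above that is easy to reason about in code. The sketch below runs a breadth-first search over an internal link graph, assuming you can export links as a hypothetical `{page: [linked pages]}` dictionary. Pages missing from the result were never reached from the start page.

```python
from collections import deque

def click_depths(links, start="/"):
    """Return {page: minimum number of clicks from start}."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                # First time we reach this page, so this is its shortest path.
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Illustrative link graph; "/orphan/" links out but nothing links to it.
links = {
    "/": ["/category/", "/about/"],
    "/category/": ["/category/product-1/"],
    "/category/product-1/": ["/"],
    "/orphan/": ["/"],
}

depths = click_depths(links)
unreached = set(links) - set(depths)
print(depths)
print("Not reachable from the homepage:", sorted(unreached))
```

Comparing depth per template, rather than per URL, usually shows whether a whole page type is buried too deep.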

6. Forgetting to validate fixes after deployment

One of the most expensive mistakes with SEO tools happens after the work is supposedly done. A recommendation gets approved, development implements it, and everyone moves on without verifying the live result. But technical fixes often change during deployment. Rules can be applied too broadly, tags can be overwritten by another system, and redirects can conflict with existing logic.

Always validate in production. Re-run the relevant crawl, inspect representative URLs manually, and confirm that the issue is fixed at the template level where appropriate. For larger changes, monitor Search Console over time to see whether coverage, indexing, or discovery patterns change in the expected direction.

Technical SEO is not complete when the ticket is closed. It is complete when the live site behaves as intended and the signals hold after release.
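A lightweight way to validate a release is to write down the signals you expect on a few representative URLs and compare them with what a post-release crawl observed. This is a sketch under stated assumptions: the signal names (`status`, `canonical`, `noindex`, `location`) and the sample data are hypothetical, and collecting the observed values is left to your crawler.

```python
def validate_fix(expected, observed):
    """Return {url: {signal: (expected, observed)}} for every mismatch."""
    mismatches = {}
    for url, want in expected.items():
        got = observed.get(url, {})
        diff = {
            signal: (value, got.get(signal))
            for signal, value in want.items()
            if got.get(signal) != value
        }
        if diff:
            mismatches[url] = diff
    return mismatches

# What the ticket promised for representative URLs.
expected = {
    "/shoes/": {"status": 200, "canonical": "/shoes/", "noindex": False},
    "/old-shoes/": {"status": 301, "location": "/shoes/"},
}
# What the production crawl found after release (illustrative values).
observed = {
    "/shoes/": {"status": 200, "canonical": "/shoes/?ref=nav", "noindex": False},
    "/old-shoes/": {"status": 301, "location": "/shoes/"},
}

for url, diff in validate_fix(expected, observed).items():
    print(url, diff)
```

An empty result means the sampled URLs behave as specified. It does not replace monitoring Search Console over the following weeks.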

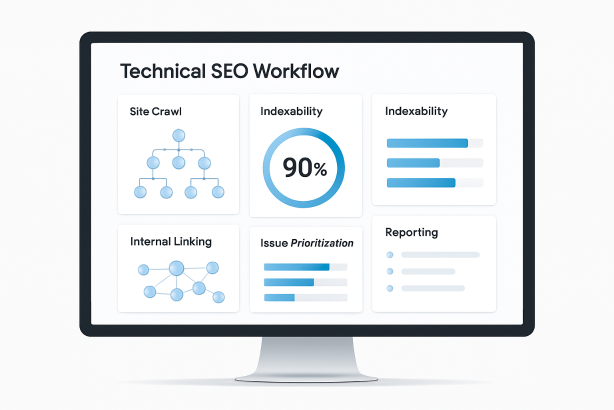

How to use SEO tools more effectively in technical SEO

Start with business-critical sections

Audit the pages that matter most: core categories, primary service pages, high-intent templates, and the sections that drive qualified traffic. That keeps your analysis tied to commercial outcomes, not just technical completeness.

Group issues by template and impact

Do not hand developers a spreadsheet full of individual URLs unless the problem is truly isolated. Group findings into template-level issues and explain the likely cause, affected page types, and expected SEO benefit.
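Grouping can often be done from the URL path alone. The following sketch assumes hypothetical path patterns for three templates. Real sites will need their own patterns, and some templates cannot be identified from the URL at all.

```python
import re
from collections import defaultdict

# Hypothetical URL patterns; the first match wins.
TEMPLATE_PATTERNS = [
    ("product", re.compile(r"^/products/[^/]+/$")),
    ("category", re.compile(r"^/category/[^/]+/$")),
    ("blog", re.compile(r"^/blog/")),
]

def template_of(path):
    """Map a URL path to a template name, or "other" if nothing matches."""
    for name, pattern in TEMPLATE_PATTERNS:
        if pattern.search(path):
            return name
    return "other"

def group_by_template(flagged):
    """Turn (path, issue) pairs into {(template, issue): [paths]}."""
    groups = defaultdict(list)
    for path, issue in flagged:
        groups[(template_of(path), issue)].append(path)
    return dict(groups)

flagged = [
    ("/products/red-shoe/", "canonical points to parameter URL"),
    ("/products/blue-shoe/", "canonical points to parameter URL"),
    ("/blog/2019/old-post/", "missing alt text"),
]

for (template, issue), paths in group_by_template(flagged).items():
    print(f"{template}: {issue} ({len(paths)} URLs)")
```

A developer ticket that reads "product template: canonical points to parameter URL" is easier to act on than a list of individual product URLs.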

Use a validation loop

Find the issue, confirm it with another source, recommend the fix, validate the release, then monitor the result. This loop prevents false positives and reduces wasted implementation time.

Protect crawl budget and index quality

For larger sites, keep a close eye on crawl budget, duplicate paths, and non-essential URL generation. The best SEO tools help you see where search engines may be spending attention on pages that should never compete for visibility.
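To see which URL patterns generate the most non-essential URLs, it can help to count how often each query parameter appears in a crawl export or log sample. This is a minimal sketch using Python's standard library, and the sample URLs are invented for illustration.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

def parameter_counts(urls):
    """Count how many URLs carry each query parameter name."""
    counts = Counter()
    for url in urls:
        query = urlsplit(url).query
        # Use a set so a parameter repeated within one URL counts once.
        names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return counts

urls = [
    "https://www.example.com/shoes/?color=red&sort=price",
    "https://www.example.com/shoes/?color=blue",
    "https://www.example.com/shoes/",
]

for name, count in parameter_counts(urls).most_common():
    print(name, count)
```

Parameters that appear on a large share of crawled URLs but never on pages you want indexed are the first candidates for tighter control.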

Turn SEO tools into action with Rabbit SEO

If your team is collecting technical SEO data but struggling to turn it into clear priorities, Rabbit SEO can help you focus on what matters. Use a more disciplined workflow to spot issues, monitor important pages, and act on technical findings before they become bigger visibility problems.

Explore Rabbit SEO to simplify your audits, organize priorities, and keep your technical SEO efforts tied to real site performance.

Conclusion: SEO tools work best when your process is stronger than the dashboard

SEO tools are indispensable, but they are only as useful as the judgment behind them. In technical SEO, the biggest mistakes are not about owning the wrong platform. They are about poor prioritization, weak validation, and a lack of context. If you avoid those mistakes, your audits become sharper, your fixes become more effective, and your technical SEO work starts producing measurable value instead of endless noise.