Choosing the right technical SEO tools is less about finding one perfect platform and more about matching software to your site size, team structure, and diagnostic needs. Most businesses do not rely on a single product. They use a stack: a website crawler for discovery, Google data for validation, and sometimes deeper crawl analysis tools or log-based reporting when scale becomes a real constraint.

That is why many buying guides fall short. They compare feature lists as if every company has the same workflow, the same stakeholders, and the same tolerance for manual analysis. This commercial comparison takes a more practical route. Instead of generic rankings, it looks at how different technical SEO tools fit common real-world buying scenarios: lean in-house teams, agencies, content-heavy publishers, large ecommerce operations, and enterprise environments where monitoring, collaboration, and governance matter just as much as the crawl itself.

If your goal is to shortlist better, avoid overlapping subscriptions, and choose tools that your team will actually use, this is the comparison that matters.

How to compare technical SEO tools before you buy

Before looking at specific products, define what you need the tool to do every week, not just during a quarterly audit. The best technical SEO audit tools are the ones that fit your operating model.

- Crawl depth and control: Can you control user agents, rendering, exclusions, extraction, segmentation, and custom reporting? A serious website crawler should help you diagnose, not just surface a generic health score.

- Scale: Desktop tools can be excellent for small and mid-sized sites, but large catalogs and publisher archives often need cloud infrastructure and more persistent reporting.

- Monitoring versus one-off audits: Some site audit software is best for ad hoc investigations. Other platforms are built for continuous SEO monitoring, alerts, and team visibility.

- Data sources beyond crawling: For larger sites, crawl data alone is not enough. Search Console integration, page speed diagnostics, and log file analysis can change what is worth fixing first.

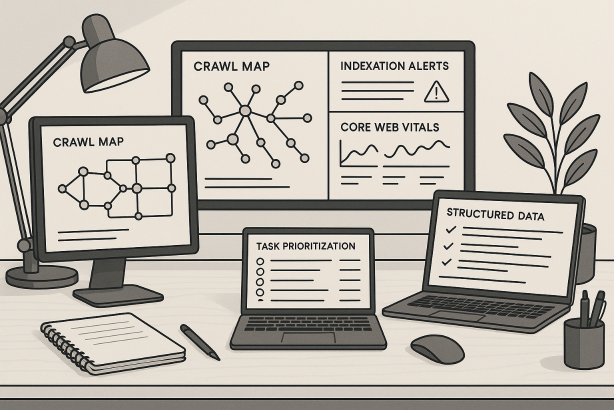

- Collaboration and reporting: A consultant can work from exports. A multi-person team usually needs saved projects, clearer prioritization, stakeholder-friendly reporting, and easier handoff to developers.

- Total cost of ownership: Budget is not just subscription price. It also includes training time, setup effort, reporting overhead, and whether your team can realistically turn outputs into action.

One more buying principle matters: not every tool is trying to win the same category. A desktop crawler, an all-in-one SEO suite, and an enterprise crawler may all offer site audits, but they are solving different operational problems.

Technical SEO tools comparison table

| Tool | Best fit | What it does well | Main trade-off |
|---|---|---|---|
| Screaming Frog | Consultants, specialists, hands-on in-house teams | Flexible crawling, strong exports, excellent for deep troubleshooting | Desktop workflow can feel technical and less collaborative |
| Sitebulb | Teams that want clearer visuals and guided audits | Accessible analysis, prioritization, easier storytelling for non-specialists | May feel less raw and less customizable for power users |
| Semrush Site Audit | Marketing teams already using a broader SEO suite | Convenient reporting inside an established platform | Not always the first choice for forensic technical analysis |
| Ahrefs Site Audit | Teams already centered on Ahrefs for research and links | Useful technical layer inside a familiar SEO workflow | Best as part of a suite, not always as the only technical stack |
| Lumar | Enterprise sites with scale and governance needs | Cloud-based crawling, enterprise workflows, broad visibility | Longer buying cycle and heavier commercial commitment |
| JetOctopus or OnCrawl | Large sites that need crawl plus logs | Strong crawl analysis tools for large inventories and log-based insights | Requires technical maturity and access to server data |
| Google Search Console plus PageSpeed Insights and Chrome DevTools | Every team | First-party indexing and performance signals, essential validation layer | Not a substitute for a full crawler or broader workflow management |

Case-study scenarios for choosing technical SEO tools

Scenario 1: A lean in-house team managing a small or mid-sized site

If you have a relatively contained site and one person owns SEO, simplicity matters more than breadth. In this scenario, a desktop crawler such as Screaming Frog or Sitebulb paired with Google Search Console is often enough to cover the essentials. You can identify indexability issues, broken links, redirects, duplicate patterns, metadata gaps, and internal linking problems without paying for enterprise infrastructure you will not use.

The decision here usually comes down to working style. If you want maximum control and you are comfortable digging through exports, Screaming Frog is often the sharper instrument. If you want faster interpretation and cleaner presentation for internal stakeholders, Sitebulb can be easier to operationalize.

Scenario 2: An agency handling multiple clients with different technical maturity levels

Agencies need two things at once: depth for specialists and clarity for clients. That often pushes the stack toward a specialist crawler plus a broader platform such as Semrush or Ahrefs for reporting continuity across accounts. The specialist tool does the heavy technical work. The suite helps connect technical findings with rankings, keywords, backlinks, and ongoing account management.

In agency environments, it is rarely smart to choose based on crawl features alone. You should also weigh seat structure, export flexibility, white-label needs, how easily issues can be explained to non-technical clients, and whether account managers can access outputs without needing a technical walkthrough every time.

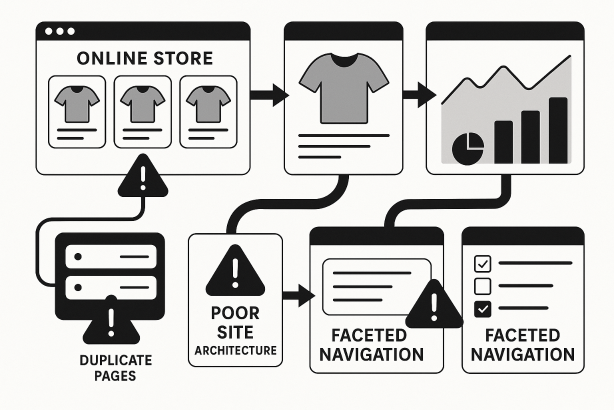

Scenario 3: A publisher or ecommerce brand with thousands of pages and templates

At larger scale, the question changes from "Can the tool crawl the site?" to "Can the team prioritize patterns fast enough to act?" This is where cloud-based site audit software and stronger segmentation become valuable. Large sites need to group findings by directory, template, content type, or business importance. They also need crawl scheduling and historical comparison so recurring problems can be spotted before they become revenue issues.

For these teams, enterprise-style platforms or log-aware tools start to justify their cost. A deep crawler can tell you what exists. Logs and trend views help you understand what search engines actually request, ignore, or revisit. That distinction becomes more important as the page count grows.
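To make the segmentation idea in this scenario concrete, here is a minimal Python sketch that groups crawl findings by top-level directory. It assumes you have already exported findings as (URL, issue) pairs from whichever crawler you use. The function names, the sample rows, and the `example.com` URLs are hypothetical and do not reflect any vendor's export format.

```python
from collections import Counter
from urllib.parse import urlparse


def top_level_section(url: str) -> str:
    """Return the first path segment of a URL, or "/" for the homepage."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return f"/{segments[0]}/" if segments else "/"


def issues_by_section(rows):
    """Count (section, issue) pairs from an iterable of (url, issue) rows."""
    counts = Counter()
    for url, issue in rows:
        counts[(top_level_section(url), issue)] += 1
    return counts


# Hypothetical rows standing in for a crawler export.
sample_rows = [
    ("https://example.com/products/red-shoe", "missing canonical"),
    ("https://example.com/products/blue-shoe", "missing canonical"),
    ("https://example.com/blog/launch-post", "redirect chain"),
    ("https://example.com/", "missing meta description"),
]

if __name__ == "__main__":
    # Largest groups first, so template-level patterns surface before one-offs.
    for (section, issue), count in issues_by_section(sample_rows).most_common():
        print(f"{section:12} {issue:28} {count}")
```

Grouping by the first path segment is only a rough proxy for a template. On a real site you would likely map URL patterns to templates or business units explicitly.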

Scenario 4: An enterprise team with engineering, product, and governance requirements

Enterprise buyers are not just purchasing technical SEO tools. They are buying process support. The chosen platform may need to serve SEO, engineering, content, analytics, and leadership at the same time. Saved views, user permissions, recurring reporting, issue tracking, and consistent documentation matter as much as raw crawl capability.

In this case, products like Lumar or log-oriented platforms such as JetOctopus and OnCrawl become more relevant. Not because smaller tools are weak, but because large organizations need continuity, scale, and a system that supports repeated collaboration. If multiple teams need access to the same diagnostic framework, spreadsheets and desktop files become a bottleneck.

Tool-by-tool breakdown of leading technical SEO tools

Screaming Frog

Screaming Frog remains one of the most practical choices for hands-on technical work. It is especially strong when you want control over crawl settings, custom extraction, redirect analysis, canonical review, and fast exports for deeper analysis elsewhere. For consultants and experienced specialists, it is often the benchmark website crawler because it rewards precision.

Its trade-off is operational rather than technical. Less experienced stakeholders may find the interface dense, and desktop-based work is not always ideal for collaborative environments.

Sitebulb

Sitebulb is often the easier recommendation for teams that need interpretation as much as raw data. It tends to present findings in a more guided way, which can shorten the path from crawl output to action. That makes it a strong option for in-house marketers, smaller agencies, and teams that want technical insight without building every analysis from scratch.

The compromise is that highly advanced users may still prefer a more granular workflow for custom technical investigations.

Semrush Site Audit

Semrush makes sense when technical SEO is only one part of a broader search operation. If your team already uses the platform for keyword research, rank tracking, and competitive analysis, keeping technical auditing in the same environment can reduce friction. It is convenient, shareable, and easier for non-specialist marketers to navigate.

However, convenience is not the same as specialist depth. For advanced diagnosis, many teams still pair it with a dedicated crawler.

Ahrefs Site Audit

Ahrefs is a similar commercial decision in a different ecosystem. If your workflow already lives inside Ahrefs, its technical layer is useful because it sits next to the link, content, and research data your team is already reviewing. That helps with context and keeps reporting consolidated.

Buyers should stay realistic about whether suite convenience can replace specialist technical analysis. For some teams it does. For deeper troubleshooting, it often does not.

Lumar

Lumar is aimed at organizations where technical SEO is a recurring cross-team function rather than an occasional audit task. It is better evaluated as an operational platform than as a simple crawler. That means the buying conversation should include governance, workflows, segmentation, scalability, and the ability to keep teams aligned over time.

The obvious trade-off is commercial weight. Enterprise platforms require more deliberate evaluation, stronger internal adoption, and a clearer business case.

JetOctopus and OnCrawl

These tools become especially relevant when crawl data alone is not enough. For large sites, log file analysis can reveal which sections are getting attention from search engines, where crawl budget may be wasted, and whether important content is being revisited as expected. That is valuable for publishers, marketplaces, and ecommerce sites with large inventories and frequent change.

The catch is that these platforms are most useful when you have the technical access and internal knowledge to work with log data properly.
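As a rough illustration of what working with log data involves, the sketch below counts requests per site section where the user agent claims to be Googlebot. It assumes access logs in the common "combined" format used by Apache and Nginx. The regular expression, function name, and sample lines are illustrative assumptions, not how JetOctopus or OnCrawl process logs.

```python
import re
from collections import Counter

# Pulls the request path, status, and user agent out of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[\d.]+" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count requests per top-level section where the agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        segments = [s for s in path.split("/") if s]
        counts[f"/{segments[0]}/" if segments else "/"] += 1
    return counts


# Hypothetical log lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"
sample_lines = [
    f'66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /products/red-shoe HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:05 +0000] "GET /products/blue-shoe?color=blue HTTP/1.1" 200 4980 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:09 +0000] "GET /blog/launch-post HTTP/1.1" 301 0 "-" "{GOOGLEBOT_UA}"',
    f'203.0.113.7 - - [10/Jan/2025:10:00:11 +0000] "GET /products/red-shoe HTTP/1.1" 200 5120 "-" "{BROWSER_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:15 +0000] "GET / HTTP/1.1" 200 8000 "-" "{GOOGLEBOT_UA}"',
]

if __name__ == "__main__":
    for section, hits in googlebot_hits_by_section(sample_lines).most_common():
        print(section, hits)
```

User-agent strings can be spoofed, so a production workflow would also verify that requests really come from Google, for example through reverse DNS lookups, before drawing conclusions about crawl behavior.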

Google Search Console, PageSpeed Insights, and Chrome DevTools

No comparison of technical SEO tools is complete without the Google layer. Search Console is essential for understanding coverage, indexing signals, search performance, and issue validation. PageSpeed Insights and Chrome DevTools remain core tools for Core Web Vitals analysis, page-level investigation, and front-end debugging.

Still, these products should be treated as critical inputs, not a complete stack. They validate and enrich your analysis, but they do not replace systematic crawling, prioritization, or ongoing technical monitoring.

How to build a practical technical SEO tools stack

For most businesses, the smartest purchase is not one platform but a combination that avoids blind spots.

- Small sites: one specialist crawler plus Google tools.

- Agencies: one specialist crawler plus one broader suite for client reporting and research.

- Large publishers and ecommerce: cloud crawling plus Google tools, with logs when crawl efficiency and indexation become persistent concerns.

- Enterprise teams: a platform that supports recurring monitoring, permissions, shared views, and cross-team workflows.

This is also where many buyers overspend. If a simple stack covers your actual decisions, an enterprise platform may add complexity without adding enough value. On the other hand, if your team is spending hours every month stitching together exports from disconnected systems, a more robust platform may be cheaper than it first appears.
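The arithmetic behind that trade-off can be sketched in a few lines of Python. Every figure below (subscription prices, hours, hourly rate) is a made-up placeholder chosen to show the calculation, not real pricing for any product named in this comparison.

```python
from dataclasses import dataclass


@dataclass
class ToolCost:
    name: str
    annual_subscription: float  # licence or subscription cost per year
    setup_hours: float          # one-time setup and training effort
    monthly_hours: float        # recurring export, stitching, and reporting effort

    def first_year_total(self, hourly_rate: float) -> float:
        """Subscription plus the cost of the team time the tool consumes."""
        labour_hours = self.setup_hours + 12 * self.monthly_hours
        return self.annual_subscription + labour_hours * hourly_rate


# Hypothetical inputs for illustration only.
simple_stack = ToolCost("simple stack", annual_subscription=300, setup_hours=4, monthly_hours=20)
platform = ToolCost("robust platform", annual_subscription=6000, setup_hours=30, monthly_hours=4)

if __name__ == "__main__":
    for option in (simple_stack, platform):
        print(option.name, option.first_year_total(hourly_rate=75))
```

With these placeholder numbers, the cheaper subscription ends up costing more in the first year because of the manual hours. Swap in your own estimates, since the result depends entirely on how much recurring effort each option really demands.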

Common buying mistakes to avoid

- Buying for feature breadth instead of workflow fit: More checkboxes do not guarantee better execution.

- Confusing dashboards with diagnosis: Health scores are useful, but they should never replace page-level investigation.

- Ignoring collaboration needs: If developers, content teams, or clients cannot use the output, the tool will underperform.

- Skipping first-party validation: Crawl findings should be tested against Search Console and performance data.

- Underestimating scale: A tool that works for 5,000 URLs may not be the right answer for 5 million.
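The first-party validation point in the list above can be as simple as a set comparison. The sketch below assumes you have two plain lists of URLs: pages your crawler considers indexable and pages reported as indexed in a Search Console export. The function name, the trailing-slash normalization, and the sample URLs are assumptions for illustration. Real exports will need their own cleanup.

```python
def compare_url_sets(crawled_indexable, search_console_indexed):
    """Return URLs that appear in one source but not the other."""
    # Naive normalization: treat URLs with and without a trailing slash as equal.
    crawled = {url.rstrip("/") for url in crawled_indexable}
    indexed = {url.rstrip("/") for url in search_console_indexed}
    return {
        "crawled_not_indexed": sorted(crawled - indexed),
        "indexed_not_crawled": sorted(indexed - crawled),
    }


# Hypothetical URL lists standing in for real exports.
crawled_urls = [
    "https://example.com/",
    "https://example.com/products/red-shoe",
    "https://example.com/blog/launch-post/",
]
indexed_urls = [
    "https://example.com",
    "https://example.com/products/red-shoe",
    "https://example.com/old-landing-page",
]

if __name__ == "__main__":
    for label, urls in compare_url_sets(crawled_urls, indexed_urls).items():
        print(label, urls)
```

URLs that are crawlable but not indexed may point to quality or canonical issues, while indexed URLs the crawler never found may be orphaned pages. Either list is a starting point for page-level investigation, not a verdict.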

A practical next step if you are comparing vendors

If you are actively reviewing technical SEO tools, add Rabbit SEO to your shortlist and compare it against the criteria that matter most for your team: visibility into site health, issue prioritization, reporting clarity, and how easily the platform fits your day-to-day workflow. A side-by-side evaluation against your current setup will tell you far more than a generic feature grid.

Final verdict on technical SEO tools

The best technical SEO tools are the ones that match your operating reality. Screaming Frog and Sitebulb remain strong choices for focused technical work. Semrush and Ahrefs are sensible when technical auditing needs to live inside a broader SEO suite. Lumar, JetOctopus, and OnCrawl make more sense as scale, collaboration, and log-based analysis become central. And Google Search Console, PageSpeed Insights, and DevTools remain essential no matter what else you buy.

If you choose with workflow, scale, and stakeholders in mind, your technical stack becomes simpler and more effective. If you choose only by feature lists, you risk paying for overlap, complexity, and reports no one acts on. In commercial terms, the right decision is not the tool with the longest checklist. It is the one that helps your team find issues, prioritize correctly, and ship fixes consistently.